Robots, drones or vehicles need artificial intelligence based on reliable data to achieve high levels of dependable autonomy. Medical service providers and space exploration need mass volumes of data analysed and assessed to draw correct conclusions from future data. Platforms handling small business microtasks have to annotate huge amounts of paperwork for machines to process it. An increasingly common solution is to parcel out the work to a crowdsourced team of willing helpers who benefit from an income boost. Data annotation platforms can vet crowdsourced applicants who want to carry out tasks, monitor their on-going performance to ensure a quality on-demand service, and match the right people with particular tasks. It’s in every stakeholder’s best interest, and is a core benefit of crowdsourcing over outsourcing.

What is data annotation?

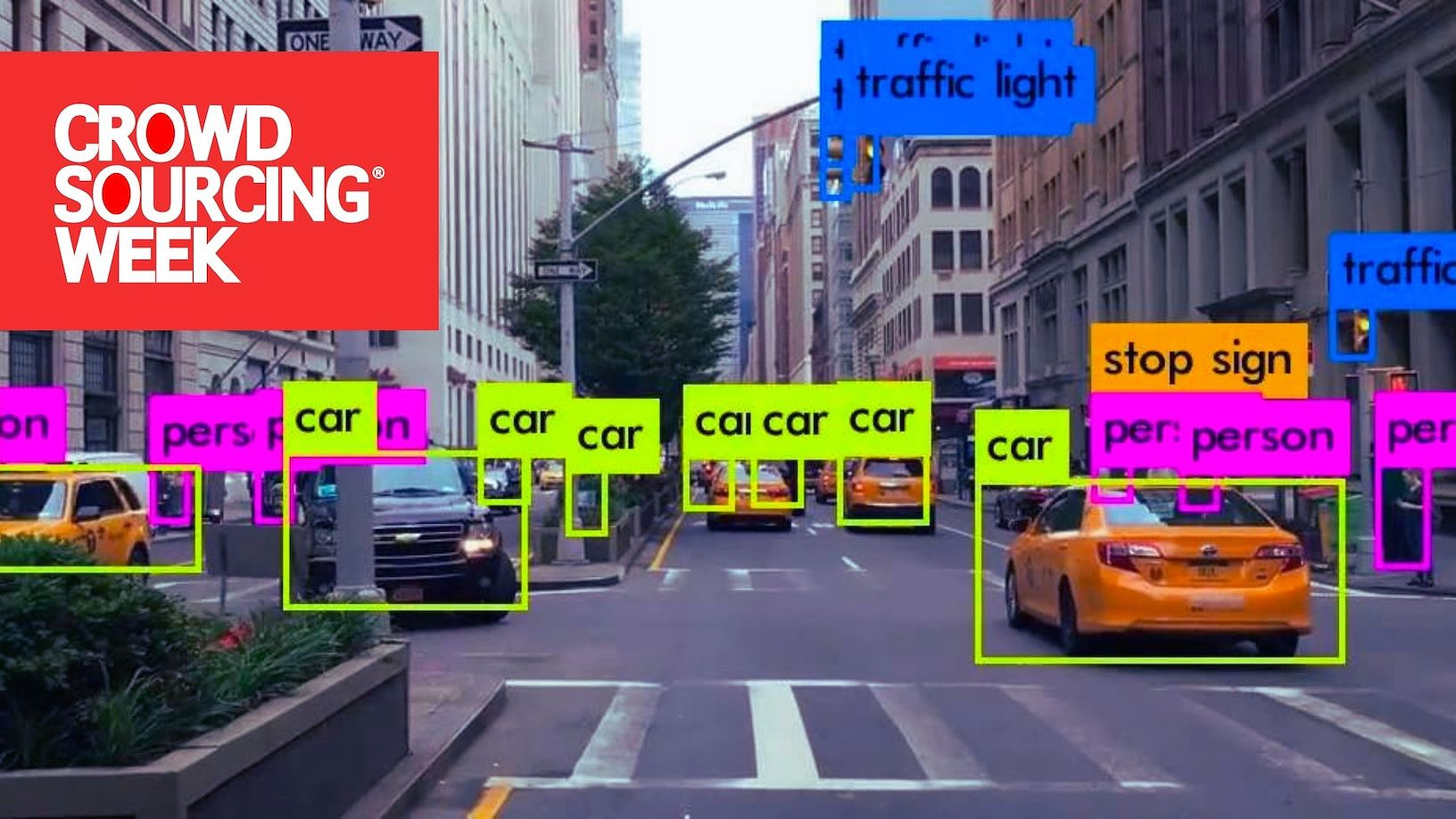

Data annotation is the technique through which we label data so that machines can categorise and recognise objects for AI applications. Data includes text, figures, images and videos. Annotating it is time-consuming and tedious work.

Training data must be properly organized and annotated for a specific use case. With high-quality, human-powered data annotation, companies can build and improve AI implementations.

The difference between data annotation and data labelling is very thin, and lies mainly in the style and type of content tagging used. The two terms are often used interchangeably when creating ML training data sets, depending on the AI model and the process of training the algorithms. This article covers crowdsourced providers of both.

The importance of reliable data annotation

Self-driving cars must be able to identify everything they might encounter on the road. Mistakes could be fatal.

Image source: Drone Ag

Drones used in precision agriculture have to be trained to identify poorly growing crops so that farmers can adjust applications of fertilizer, water, or pesticide before an entire harvest is lost. They must tell healthy fruits, vegetables and crops from unhealthy ones, even though these vary widely in shape and are seen under a wide range of conditions.

In healthcare, the creation of algorithms is about utilizing existing databases which mainly encompass imaging files, CT or MR scans, samples used in pathology, etc. At the same time, data annotation involves drawing lines around tumors, pinpointing cells or designating ECG rhythm strips. Thousands of them. People have to do it first for machines to be trained to then do it on a larger scale.

Studying the cosmos is like watching history, given that what we see today can be light that has travelled billions of years to be visible from Earth. As telescopes develop and more and more of space becomes visible, human analysis of existing images creates the basis of machine learning to power accurate AI scanning of new ones.

Here is a look at five platforms that utilise crowdsourced annotators.

Amazon Mechanical Turk (MTurk)

MTurk lets companies tap into a vast talent pool of over half a million microtaskers to complete Human Intelligence Tasks, or HITs. The workers are primarily in the US, though their global distribution means that between them they can provide a round-the-clock service. Firms, or other “Requesters,” can use MTurk to hire individual “regular workers” to help them complete batches of specific tasks for their machine learning projects. It is an ideal fit for small-scale projects with simple jobs like labelling images or transcribing text such as on receipts. Larger tasks should be broken down into a set of smaller, more manageable microtasks that can each be accomplished independently. These relatively simple tasks can then be completed quickly and cheaply, and the workers can fit in microtasking whenever other responsibilities and obligations allow.
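To make the idea of breaking a larger job into independent microtasks concrete, here is a minimal sketch in Python. It is not MTurk's API: the function name `split_into_microtasks`, the batch size and the receipt file names are all hypothetical, chosen only to illustrate the principle.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_into_microtasks(items: Sequence[T], batch_size: int) -> List[List[T]]:
    """Split one large job into small batches that can be worked on independently.

    Hypothetical helper for illustration only; it does not call any platform API.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    # Step through the items in strides of batch_size; the last batch may be shorter.
    return [list(items[i:i + batch_size]) for i in range(0, len(items), batch_size)]


# Hypothetical job: seven scanned receipts that need transcribing.
receipts = [f"receipt_{n:03d}.png" for n in range(1, 8)]
batches = split_into_microtasks(receipts, batch_size=3)

for number, batch in enumerate(batches, start=1):
    print(f"microtask {number}: {batch}")
```

Because no batch depends on another, each one can be picked up by a different worker at a different time, which is what makes the round-the-clock model workable.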

Worker performance has to be checked, and is graded by the requesters. Workers develop a track record that can steer them to the optimum tasks for their skills. As well as “regular” workers there is also a better qualified (and more expensive) grade of “master” workers. These particular “MTurkers” have usually spent at least twice as much time working on MTurk, and have completed over seven times the average number of assignments. Setting qualifications offers requesters a powerful mechanism to control which workers are allowed to view and work on their tasks.
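As a rough illustration of how a track record can gate access to tasks, the sketch below filters a list of workers by approval rate, number of completed assignments and master status. Everything in it is an assumption made for the example: the `Worker` class, the `eligible_workers` function and the threshold values are hypothetical and do not reflect MTurk's actual qualification system.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Worker:
    """Hypothetical track record for one worker."""
    worker_id: str
    approved: int          # assignments the requesters accepted
    rejected: int          # assignments the requesters rejected
    is_master: bool = False

    @property
    def completed(self) -> int:
        return self.approved + self.rejected

    @property
    def approval_rate(self) -> float:
        # A worker with no history gets a rate of 0.0 rather than a division error.
        return self.approved / self.completed if self.completed else 0.0


def eligible_workers(
    workers: Iterable[Worker],
    min_approval_rate: float = 0.95,   # assumed threshold
    min_completed: int = 100,          # assumed threshold
    masters_only: bool = False,
) -> List[Worker]:
    """Return the workers whose track record meets every requirement."""
    return [
        w for w in workers
        if w.approval_rate >= min_approval_rate
        and w.completed >= min_completed
        and (w.is_master or not masters_only)
    ]


# Made-up sample data.
workers = [
    Worker("w1", approved=980, rejected=20),
    Worker("w2", approved=40, rejected=2),
    Worker("w3", approved=5000, rejected=50, is_master=True),
    Worker("w4", approved=300, rejected=60),
]

print([w.worker_id for w in eligible_workers(workers)])
print([w.worker_id for w in eligible_workers(workers, masters_only=True)])
```

Raising the thresholds narrows the pool to more reliable workers, at the cost of fewer people being available to take the task.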

Overall median pay per hour is low, with the higher paying tasks often snapped up by a minority of the more experienced workers. Those more experienced workers will also have been able to identify requesters who are less likely to dismiss work as not being properly completed, and thus hold back payment. However, despite wrangles over quality of work and payments, Mechanical Turk remains a source of income for people unable to attend a workplace due to factors including incapacity, carer responsibilities, or remoteness.

Toloka

For companies: Toloka is a crowdsourcing platform and microtasking project launched by Yandex (the Russian search engine and web portal) in 2014. It can quickly mark up large amounts of data, which are then used for machine learning and improving search algorithms. The proposed tasks are usually very simple so as to not require any special training from the task performer.

For task performers: Toloka is an app for earning money online without requiring any personal investment. Performers can choose tasks, complete them online or offline when it’s convenient for them, and get rewarded. Anyone can earn money in Toloka — no special knowledge is required. Tasks are simple and they don’t need any particular skills or experience to complete them, beyond using the internet.

ScaleHub

Rather than take on more staff, increasing numbers of organisations use shared services centres and business process outsourcers for the on-demand execution and handling of specific operational tasks, such as accounting, human resources, payroll, IT, legal, compliance, purchasing, and security.

The SSCs and BPOs, including the members of the International Association of Outsourcing Professionals, Amazon Web Services, and IBM Global Business Services, do the ‘front office’ work of finding customers. ScaleHub focuses on providing them with cloud-based ‘back office’ access to crowdsourced communities around the world who will perform the required tasks. It does this through its back-end global network of data annotation and labelling service providers, which includes DYNAMIX, which operates across Europe; A;gusth, its partner in Southeast Asia; and Feith Systems, ScaleHub’s US partner. ScaleHub provides its back-end delivery partners with technology.

A recent addition to ScaleHub’s portfolio of BPOs is UPsource, based in Rwanda and able to provide a pan-African service by connecting young African professionals to digital work opportunities.

Neevo

Neevo has a crowd of over 500,000 global contributors to its Automatic Speech Recognition datasets. All anyone needs is a device to connect to the internet, some spare time, and a PayPal account to receive payment. Simple tasks include annotating text, audio, images or even video, or turning text into spoken words. To ensure accurate use, the spoken word data is then itself transcribed and annotated with related words or phrases so that it is used correctly. Neevo has found that using different crowds to speak, transcribe and then annotate increases the level of data accuracy.
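The idea of using different crowds for each stage can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Neevo's actual process: the function `assign_stages`, the round-robin allocation and the contributor IDs are all hypothetical.

```python
from typing import Dict, List, Sequence

STAGES = ("speak", "transcribe", "annotate")


def assign_stages(
    contributors: Sequence[str],
    stages: Sequence[str] = STAGES,
) -> Dict[str, List[str]]:
    """Deal contributors into separate groups, one group per stage.

    Hypothetical round-robin allocation: nobody appears in more than one
    stage, so no one checks or builds on their own work.
    """
    # Drop repeated IDs while keeping the original order.
    unique = list(dict.fromkeys(contributors))
    if len(unique) < len(stages):
        raise ValueError("need at least one contributor per stage")
    groups: Dict[str, List[str]] = {stage: [] for stage in stages}
    for index, person in enumerate(unique):
        groups[stages[index % len(stages)]].append(person)
    return groups


# Made-up contributor IDs.
crowd = ["c1", "c2", "c3", "c4", "c5", "c6", "c7"]
groups = assign_stages(crowd)

for stage, members in groups.items():
    print(f"{stage}: {members}")
```

Keeping the groups disjoint means an error made at one stage is more likely to be caught by a fresh pair of eyes (or ears) at the next.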

Neevo is the spoken word data capture arm of Defined.ai, a provider of high-quality speech data and a wider infrastructure of solutions for training artificial intelligence, all focused on making AI smarter. Defined.ai’s high-quality data is available in a variety of delivery options, including off-the-shelf data and customized collection, and in over 50 languages to help global AI initiatives drive business goals. In addition to the number of languages, Neevo prides itself on providing data sets covering regional accents and dialects, plus data provided by people who are not speaking in their native tongue. This improves the efficiency level of the AI training.

Defined.ai’s head office is in Seattle, Washington State, US; other offices are in Lisbon and Porto in Portugal, and Tokyo in Japan.

Appen

Appen also provides high-quality speech data sets for clients to confidently train and deploy world-class AI. Remote work is changing how the world does business, and Appen is a sector pioneer. They help their clients enhance best-in-class speech-operated products and services around the world, including search engines, social media platforms, voice recognition systems, sentiment analysis, and eCommerce sites.

They do this from their base in Sydney, Australia, by tapping into their crowd of more than one million people, whose international diversity and flexibility help clients meet the ever-changing needs of their customers. Annotators are readily available 24/7 for simple microtask annotations that don’t require a particular skill set, or custom crowds of skilled annotators can be recruited for task-specific ASR datasets. For work involving sensitive or confidential information, specially identified and certified annotators can be located at one of Appen’s secure facilities to focus on the task.

One more we want to mention

CONNECTED Women is a social impact tech start-up, which offers online skills development and remote work opportunities to Filipino women. Their pool of over 8,000 remote workers includes under-privileged women they have helped up-skill, who would otherwise struggle to find work due to their distance from suitable jobs, family commitments or lack of jobs available. It also includes women re-skilling or transitioning from corporate jobs to remote working as virtual assistants or data annotators.

BOLD Awards III

Remote Working is one of 20 categories in the annual BOLD Awards for digital industries, now in its third year. The aim is to source and share stories of individuals, companies and organizations – large and small – from all over the world who are managing crowd-related projects and initiatives in a way that really powers breakthroughs. If that includes you, then enter NOW!

A round of public voting in January 2022 will shortlist projects that go before an international judging panel, and winners will be announced and receive their award at a gala dinner ceremony hosted by the innovation hub H-FARM at their campus in Venice, Italy. Would you like to be there on April 22, 2022?
